From isolated alerts to contextual intelligence: Agentic maritime anomaly analysis with generative AI

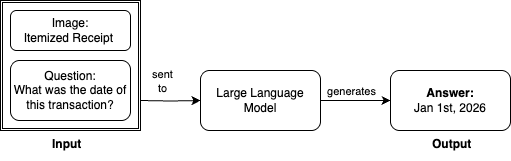

This blog post demonstrates how Windward improves and accelerates maritime alert investigation by combining geospatial intelligence with generative AI, so analysts can focus on decision-making rather than data collection.